Nginx was originally created for a single purpose: serving web pages.

Now it is much more than that.

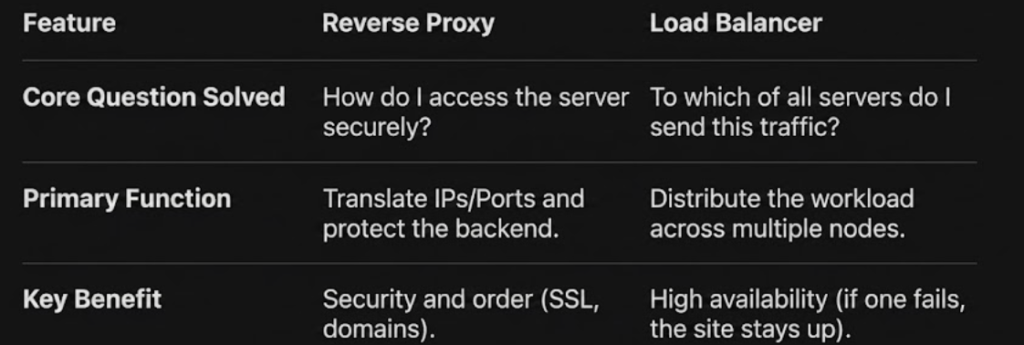

Today it is mainly used as a Reverse Proxy and Load Balancer because of its high efficiency.

Its main uses:

- Web Server: Delivers content directly to the user.

- Reverse Proxy: Receives user requests and forwards them to other servers (such as a Spring Boot application or a Docker container).
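To make the two roles concrete, here is a minimal sketch of a single server block that plays both. The domain example.com, the folder /var/www/html and the backend port 8080 are placeholders assumed for illustration, not values from a real setup.

```nginx
server {
    listen 80;
    server_name example.com;            # placeholder domain

    # Web Server role: serve files straight from disk
    root /var/www/html;                 # assumed location of the static files
    index index.html;

    location / {
        # Return the requested file (or directory index), otherwise 404
        try_files $uri $uri/ =404;
    }

    # Reverse Proxy role: hand /api/ requests to a backend application
    location /api/ {
        # Assumed backend port; the full /api/... path is passed through unchanged
        proxy_pass http://127.0.0.1:8080;
    }
}
```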

What is a Load Balancer?

A load balancer is a traffic distributor: it spreads incoming requests across several servers.

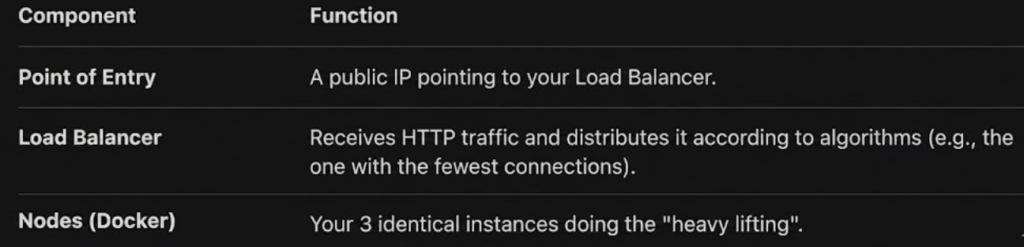

The purpose of a Load Balancer is scalability. It ensures that no server is overworked while others sit idle.

- Focus: Performance and availability. If one server goes down, the load balancer sends traffic to the remaining servers.

- Relationship: One-to-many (one load balancer for multiple servers performing the same function).

Imagine you have so much traffic that a single container for your app can’t handle it. You launch three identical containers. The Load Balancer (Nginx) receives all the visits and distributes them among these three containers so that none of them becomes overloaded.
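A minimal sketch of that three-container setup, assuming Nginx runs on the same Docker network as the app and that the containers are named app1, app2 and app3 and listen on port 8080 (all hypothetical names). The max_fails and fail_timeout parameters also cover the failover case described above: a server that keeps failing is taken out of rotation for a while.

```nginx
# Goes inside the http { } context (for example, a file included from conf.d/)
upstream app_backend {
    # Hypothetical container names; Docker's DNS resolves them to container IPs.
    # After 3 failed attempts within 30s, a server is skipped for 30s.
    server app1:8080 max_fails=3 fail_timeout=30s;
    server app2:8080 max_fails=3 fail_timeout=30s;
    server app3:8080 max_fails=3 fail_timeout=30s;
    # No balancing method is named, so Nginx uses round robin.
}

server {
    listen 80;
    server_name example.com;            # placeholder domain

    location / {
        proxy_pass http://app_backend;  # the upstream group defined above
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        # On a connection error or timeout, retry the request on the next server
        proxy_next_upstream error timeout;
    }
}
```

Nginx resolves these container names when it loads the configuration, so the containers need to be running and resolvable before Nginx starts or reloads.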

Efficiency: The load balancer doesn’t “process” business logic (it doesn’t query databases or generate PDFs). It simply directs requests, like a traffic cop directing cars, so each request costs it far less work than it costs the application containers behind it.

Low resources: A single, well-configured Nginx can handle tens of thousands of simultaneous connections with very little RAM.
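How many connections Nginx accepts is set mainly by two directives at the top level of nginx.conf. A sketch; the number below is only illustrative, not a tuning recommendation:

```nginx
# Top level of nginx.conf
worker_processes auto;          # one worker process per CPU core

events {
    # Maximum simultaneous connections each worker may open (illustrative value)
    worker_connections 10240;
}
```

Roughly, the ceiling is worker_processes × worker_connections. When Nginx acts as a proxy, each client also needs a second connection to the backend, and the operating system's open-file limit (which worker_rlimit_nofile can raise) must be high enough too.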

What happens if the traffic is massive?

If your site grows so much that a single load balancer is not enough, infrastructure scaling techniques are applied:

- DNS-level load balancing: Multiple load balancers are configured, and the DNS server distributes requests among them (Round Robin DNS).

- IP Anycast: A single IP address is advertised from multiple data centers around the world. Traffic is routed to the load balancer closest to the user.

- Dedicated hardware: Large enterprises use specialized physical equipment (such as F5 appliances) designed specifically to handle enormous volumes of traffic.

By the time a single load balancer becomes overloaded, you would typically already be running hundreds of Docker instances, not just three!

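If you have an application running on port 3000 (for example, a dashboard or an API) and you want the world to reach it through a friendly URL, you use a server block. This minimal version listens on plain HTTP (port 80); adding SSL means also listening on port 443 with a certificate.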

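```nginx
server {
    listen 80;
    server_name subdomain.domaindns.com;

    location /newpathendpoint {
        # Forward matching requests to the app listening on port 3000.
        # No URI after the port, so the full path (/newpathendpoint/...) reaches the app unchanged.
        proxy_pass http://localhost:3000;

        # Pass the original Host header and the client's IP on to the app
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
    }
}
```

Security: Nginx acts as a shield. The internal ports of your containers or applications are never exposed directly.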

SSL/TLS: It is much easier to configure a certificate (like one from Let’s Encrypt) once in Nginx than within each Java or Node application.
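As a sketch, here is the earlier example served over HTTPS. It assumes a certificate has already been issued by Let's Encrypt using Certbot, which by default stores the files under /etc/letsencrypt/live/ followed by the domain name; check the actual paths on your own server.

```nginx
# Send all plain-HTTP visitors to the HTTPS version of the same URL
server {
    listen 80;
    server_name subdomain.domaindns.com;
    return 301 https://$host$request_uri;
}

server {
    listen 443 ssl;
    server_name subdomain.domaindns.com;

    # Certbot's default locations (assumed); adjust if yours differ
    ssl_certificate     /etc/letsencrypt/live/subdomain.domaindns.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/subdomain.domaindns.com/privkey.pem;

    location /newpathendpoint {
        proxy_pass http://localhost:3000;   # the app itself still speaks plain HTTP
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-Proto $scheme;  # tells the app the client used HTTPS
    }
}
```

TLS ends at Nginx, which is why the application behind it needs no certificate of its own.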

Flexibility: You can run many apps on different ports (3000, 4000, 5000) and expose them all through port 80 or 443, separated by route or by subdomain.
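For example, assuming three hypothetical apps on ports 3000, 4000 and 5000 and the placeholder domain domaindns.com, two apps can share one domain under different routes while the third gets its own subdomain:

```nginx
# Same domain, different routes
server {
    listen 80;
    server_name domaindns.com;                  # placeholder domain

    location /dashboard/ {
        # Trailing slash: /dashboard/x reaches the app as /x
        proxy_pass http://localhost:3000/;
    }

    location /api/ {
        # /api/x reaches the app as /x
        proxy_pass http://localhost:4000/;
    }
}

# A separate subdomain for the third app
server {
    listen 80;
    server_name admin.domaindns.com;            # hypothetical subdomain

    location / {
        proxy_pass http://localhost:5000;
    }
}
```

The trailing slash on proxy_pass matters: with it, Nginx replaces the matched location prefix; without it, the app receives the original path including the prefix.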

Reverse Proxy

The purpose of a reverse proxy is intermediation. It ensures that the client never communicates directly with the real server.

- Focus: Security, server anonymity, and name management (such as exposing an app on port 3000 as domain.com).

- Relationship: Generally one-to-one (one proxy for one application server).

- If you configure Nginx to point server.axxelin.com to your app on port 3000, you are using its Reverse Proxy feature.

- If tomorrow your app gets so much traffic that you need to run it on ports 3000, 3001 and 3002, and you tell Nginx to distribute the traffic among the three, you are using its Load Balancer feature.
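In configuration terms the difference between these two cases is small. A sketch, assuming three copies of the app listening on 127.0.0.1 ports 3000, 3001 and 3002 (axxelin_app is a hypothetical upstream name):

```nginx
# Load Balancer: the three instances of the app
upstream axxelin_app {
    server 127.0.0.1:3000;
    server 127.0.0.1:3001;
    server 127.0.0.1:3002;
}

server {
    listen 80;
    server_name server.axxelin.com;

    location / {
        # Reverse Proxy only would be: proxy_pass http://localhost:3000;
        proxy_pass http://axxelin_app;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
    }
}
```

Swapping a single address for an upstream group is all it takes to turn the same proxy into a load balancer; with no method specified, Nginx uses round robin.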

The Reverse Proxy is the role (sitting in the middle), and Load Balancing is a skill this proxy can have for handling many servers at once.